Software development moves at a rapid pace today, requiring teams to release updates quickly while maintaining high quality standards. A robust strategy for software testing and automation helps you catch critical bugs before they reach your customers. You can achieve this balance by combining human insight with powerful test automation to ensure a seamless user experience. By integrating these practices, developers can focus on building features rather than constantly fixing regressions in the code. In an era where user expectations are at an all-time high, the ability to deliver flawless software is a significant competitive advantage for any organization.

Let us look at how modern teams build reliable applications through comprehensive software testing. We will explore the exact methods industry leaders use to ship code with confidence and precision. Understanding the testing process forms the foundation of reliable product releases and long-term project success. Every automated test you create contributes to a more stable and predictable deployment cycle for your entire team. Furthermore, a well-structured approach to quality assurance reduces the technical debt that often accumulates during rapid development cycles.

The evolution of digital products necessitates a more sophisticated approach to quality than ever before. As applications become more interconnected through APIs and microservices, the surface area for potential failures grows with every new integration point. Implementing a rigorous software testing and automation protocol ensures that these complex interactions are verified continuously. This proactive stance on quality not only protects the brand’s reputation but also optimizes the overall development budget by reducing emergency hotfixes.

At its heart, a software test is a controlled procedure used to evaluate the quality and functionality of an application. This process ensures that the software meets the specified requirements and behaves as expected under various conditions. While manual testing remains essential for usability, modern teams increasingly rely on test automation to handle repetitive and high-volume tasks. A well-defined software test provides the necessary evidence that a feature is ready for production use within the software development lifecycle.

The primary goal of software testing is to identify defects as early as possible in the development lifecycle. By catching bugs early, teams can reduce the cost of repairs and prevent negative impacts on the end-user experience. Every software test should be designed with a clear objective and expected outcome to ensure maximum effectiveness. This systematic approach allows developers to maintain a high standard of quality throughout the entire project duration, fostering trust with stakeholders.

In addition to finding bugs, testing serves as a validation of the business logic and user requirements. It provides a documented trail of how the system should behave, which is invaluable for onboarding new team members. When a testing tool is used effectively, it can generate reports that highlight areas of risk and guide future development priorities. Ultimately, the foundation of any successful software project is a commitment to rigorous and repeatable verification processes.

When you perform manual testing, you are using human intuition to explore the application and find unexpected behaviors. This method is particularly useful for assessing the visual appeal and overall user journey of a new product. However, relying solely on a manual test can become a bottleneck as the application grows in complexity and size. Therefore, a balanced approach that incorporates both manual and automated methods is usually the most effective strategy for modern engineering teams.

Test Automation: The Shift to Test Automation

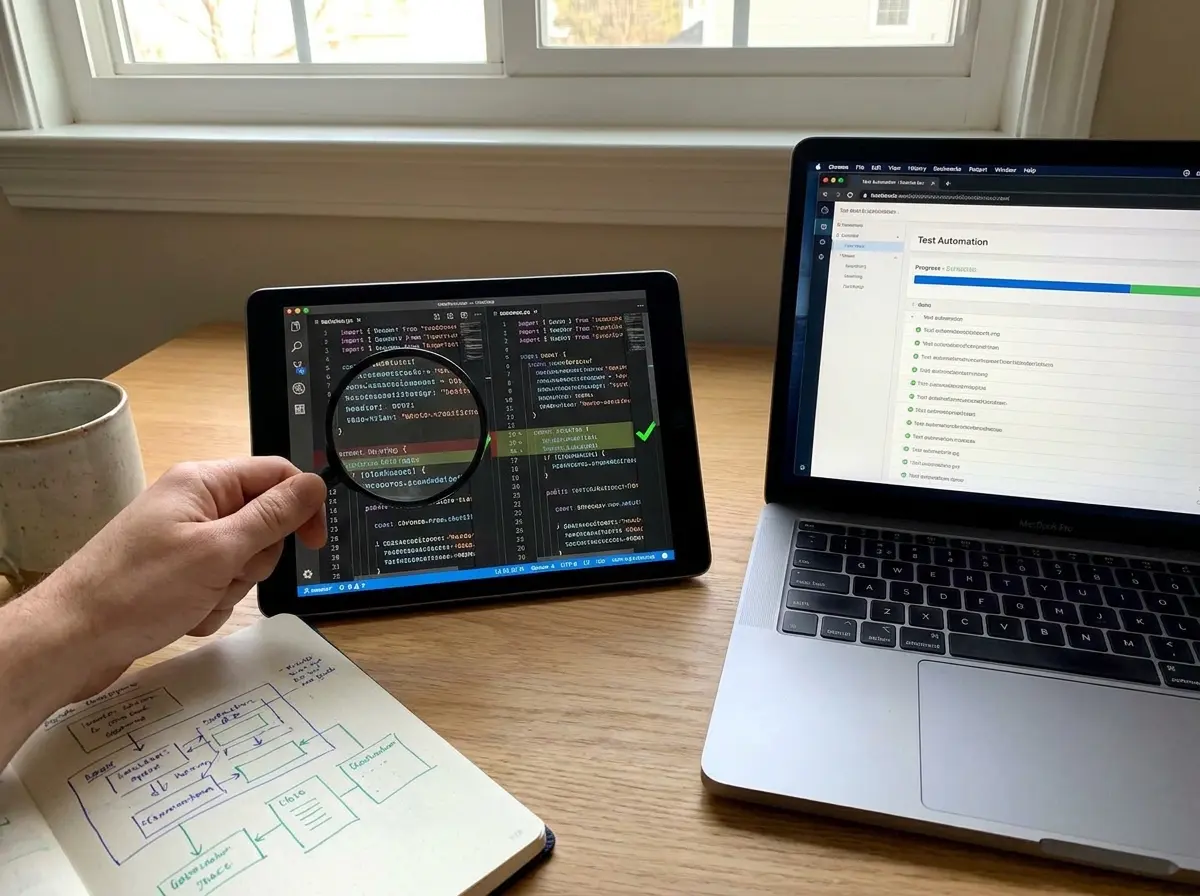

The transition toward test automation has revolutionized how software is built and delivered in the modern era. By using automated testing, teams can execute hundreds of checks in a fraction of the time it takes manually. This shift allows for more frequent releases and a much faster feedback loop between developers and quality assurance specialists. Implementing test automation is no longer a luxury but a necessity for any team aiming for high velocity and consistent reliability.

One of the biggest advantages of automated testing is its ability to run consistently without the risk of human error. An automated test will perform the exact same steps every time, ensuring that existing functionality remains intact after changes. This reliability is crucial when managing a large codebase where a single change can have many unintended side effects. Consequently, test automation provides a safety net that empowers developers to innovate and refactor code more freely without fear of breakage.

Integrating test automation into your CI/CD pipeline ensures that every code commit is automatically verified. This practice helps maintain a high level of quality by preventing broken code from reaching the main branch. When automated tests fail, the team is notified immediately, allowing for rapid resolution of the underlying issue before it compounds. This continuous approach to automated testing is a cornerstone of modern DevOps and agile development methodologies that prioritize speed and stability.
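As a rough sketch of this gating idea, a pipeline step can run the suite and turn the result into an exit code, which is how most CI systems decide whether a step passed. The `apply_discount` function and the test names are hypothetical, and the example assumes only Python's standard `unittest` module.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical production function under test."""
    return round(price * (1 - percent / 100), 2)


class DiscountTests(unittest.TestCase):
    def test_ten_percent_off(self):
        self.assertEqual(apply_discount(200.0, 10), 180.0)

    def test_zero_percent_keeps_price(self):
        self.assertEqual(apply_discount(59.99, 0), 59.99)


def run_checks() -> int:
    """Run the suite and return 0 on success, 1 on any failure or error."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountTests)
    result = unittest.TextTestRunner(verbosity=2).run(suite)
    return 0 if result.wasSuccessful() else 1
```

A pipeline script could end with `sys.exit(run_checks())`, so that a failing test produces a non-zero exit code and blocks the merge.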

Furthermore, the ROI of automated tests becomes increasingly apparent as the project matures and the regression suite grows. While the initial setup requires time and resources, the long-term savings in manual effort are substantial. Teams can redirect their manual testing efforts toward high-value activities like exploratory testing and user experience research. This shift not only improves software quality but also enhances the overall job satisfaction of the QA team by removing mundane tasks.

Testing Type: Essential Regression Testing and More

Choosing the right testing type for each scenario is critical for building a comprehensive and effective quality assurance strategy. Different types of tests focus on various aspects of the application, from individual functions to the entire user experience. For instance, regression testing ensures that new code changes do not break features that were previously working correctly. By categorizing your efforts, you can ensure that no part of the system is left unverified during the deployment process.
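To make the regression idea concrete, here is a minimal sketch in which each fixed bug gets a test that pins the corrected behavior. The `slugify` helper and the two "past bugs" are invented for illustration. The tests are plain functions with `assert` statements, a style that runners such as pytest can collect.

```python
import re


def slugify(title: str) -> str:
    """Hypothetical helper: turn a title into a URL-friendly slug."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


def test_basic_title():
    assert slugify("Hello World") == "hello-world"


def test_regression_repeated_spaces():
    # Pins a (hypothetical) past bug where repeated separators
    # produced "hello---world" instead of a single hyphen.
    assert slugify("Hello   World") == "hello-world"


def test_regression_trailing_punctuation():
    # Pins a (hypothetical) past bug where a trailing "!" left a dangling hyphen.
    assert slugify("Release notes!") == "release-notes"
```

If a later change reintroduces either behavior, the matching test fails and points directly at the feature that broke.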

Another vital component is integration testing, which verifies that different modules or services within the application work together seamlessly. This is especially important in microservices architectures where many independent components must communicate over a network or through APIs. Similarly, API testing focuses on validating the endpoints that power your application’s data exchange and business logic. Both integration testing and API testing are essential for maintaining the structural integrity of complex software systems in a production environment.
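One way to picture an integration test is to exercise two modules together against a real but disposable database. The sketch below assumes a hypothetical `UserRepository` and `SignupService` and uses an in-memory SQLite database from the standard library, so nothing is left behind after the run.

```python
import sqlite3


class UserRepository:
    """Hypothetical data-access module backed by SQLite."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS users "
            "(id INTEGER PRIMARY KEY, email TEXT UNIQUE)"
        )

    def add(self, email: str) -> int:
        cur = self.conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
        self.conn.commit()
        return cur.lastrowid

    def find_by_email(self, email: str):
        return self.conn.execute(
            "SELECT id, email FROM users WHERE email = ?", (email,)
        ).fetchone()


class SignupService:
    """Hypothetical business-logic module that depends on the repository."""

    def __init__(self, repo: UserRepository):
        self.repo = repo

    def register(self, email: str) -> int:
        normalized = email.strip().lower()
        if self.repo.find_by_email(normalized) is not None:
            raise ValueError("email already registered")
        return self.repo.add(normalized)


def test_signup_persists_normalized_email():
    conn = sqlite3.connect(":memory:")
    try:
        repo = UserRepository(conn)
        user_id = SignupService(repo).register("  Ada@Example.com ")
        # The service's normalization and the repository's storage are
        # verified together, not in isolation.
        assert repo.find_by_email("ada@example.com") == (user_id, "ada@example.com")
    finally:
        conn.close()


def test_duplicate_signup_is_rejected():
    conn = sqlite3.connect(":memory:")
    try:
        service = SignupService(UserRepository(conn))
        service.register("ada@example.com")
        try:
            service.register("ADA@example.com")
        except ValueError:
            rejected = True
        else:
            rejected = False
        assert rejected
    finally:
        conn.close()
```

Unlike a unit test, neither module is replaced with a fake here. The point is to catch mismatches at the boundary between them.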

Beyond these, functional testing checks the software against the business requirements to ensure it performs the tasks it was designed for. Integration tests can also cover the data flow between the database and the user interface. For a complete view, end-to-end tests simulate a real user’s journey through the entire application from start to finish. Each testing type plays a unique role in creating a robust and reliable product for your customers. Regression testing, in particular, should be performed after every major update.

Smoke testing and sanity testing are also critical subsets of your strategy that provide quick validation of build health. A smoke test verifies that the most critical functions of the application work, allowing the team to decide if further testing is warranted. Sanity testing, on the other hand, focuses on a specific functional area to ensure that bugs have been fixed as intended. Together, these testing types form a multi-layered defense against software defects and performance regressions.

Manual Test vs Automated Test: Balancing Manual and Automated Methods

Deciding when to use a manual test versus an automated test is a strategic choice that affects your team’s efficiency. A manual test is ideal for exploratory testing, where a tester uses their creativity to find bugs that scripts might miss. This human-centric approach is also necessary for accessibility testing and evaluating the overall “feel” of the user interface. However, for repetitive tasks like checking login forms or data entry, an automated test is far more efficient and cost-effective.

The goal is to use test automation for the “boring” and repetitive parts of the process, freeing up humans for high-value work. While an automated test can check if a button works, it cannot tell you if the button’s placement is confusing to users. Therefore, manual testing provides the qualitative insights that automated testing simply cannot replicate through code alone. Balancing these two methods ensures that you achieve both high technical quality and a great user experience across all platforms.

In a typical automation test scenario, you might automate 80% of your test cases while leaving the remaining 20% for manual review. This ratio allows you to maintain a fast test execution speed while still benefiting from human oversight and critical thinking. Every manual test should be documented as a test case to ensure consistency and provide a clear path for future automation. By strategically choosing between a manual test and an automated test, you optimize your resources for the best possible outcome.

A helpful way to visualize this balance is through the “Testing Pyramid” model, which suggests a large base of unit tests, a middle layer of service tests, and a small top layer of UI tests. This structure ensures that the majority of your test case volume is fast and reliable, while the slower manual and UI tests are used sparingly. By following this model, teams can avoid the “ice cream cone” anti-pattern, where too much reliance is placed on slow, brittle UI-level automation. A well-balanced test case library is the key to sustainable quality assurance.

Test Automation Tool: How to Build a Test Automation Framework

To succeed with test automation, you need a solid framework that supports the creation and maintenance of your test suite. A test automation tool provides the structure and libraries needed to write a test script that interacts with your application. A good framework should be modular, allowing you to reuse code across different test cases to save time and effort. This organization is key to scaling your automation testing efforts as the project grows in size and complexity.

Managing test data is another critical aspect of building a reliable and repeatable automation test framework. Your test scripts should be able to set up and tear down the environment to ensure each test runs in a clean state. This prevents “flaky tests” that fail due to leftover data from a previous automated test run rather than actual bugs. By investing in a robust test automation tool, you create a foundation that supports long-term quality and stability for the entire organization.
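The setup and teardown pattern described above can be sketched with the standard `unittest` module. The test class and file names are hypothetical. Each test gets its own temporary directory, so nothing written by one test is visible to the next.

```python
import tempfile
import unittest
from pathlib import Path


class ReportExportTests(unittest.TestCase):
    def setUp(self):
        # Fresh, empty working directory before every test.
        self._tmp = tempfile.TemporaryDirectory()
        self.workdir = Path(self._tmp.name)

    def tearDown(self):
        # Remove everything the test created, whether it passed or failed.
        self._tmp.cleanup()

    def test_export_creates_one_file(self):
        (self.workdir / "report.csv").write_text("id,total\n1,9.50\n")
        names = [p.name for p in self.workdir.iterdir()]
        self.assertEqual(names, ["report.csv"])

    def test_directory_starts_empty(self):
        # Would fail if data leaked in from another test.
        self.assertEqual(list(self.workdir.iterdir()), [])
```

Because the clean state is rebuilt for each test, the order in which the tests run does not affect the outcome.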

When organizing your test suite, you might assign a specific label to each test case to categorize them by priority or feature. This makes it easier to run a subset of tests, such as a smoke test suite, during the early stages of a build. Well-written test scripts are easy to read and maintain, ensuring that other team members can contribute to the test automation effort. A strong framework is the backbone of any successful automated software testing initiative in a professional environment.
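The labeling idea can be illustrated with a tiny registry written in plain Python. The `label` decorator, `run_labeled` helper, and test names are all hypothetical. Established runners provide their own mechanism for this, for example pytest markers selected with `pytest -m smoke`.

```python
from collections import defaultdict

# label -> list of test functions; a hypothetical registry for illustration.
_REGISTRY = defaultdict(list)


def label(*names):
    """Decorator that files a test function under one or more labels."""
    def decorator(func):
        for name in names:
            _REGISTRY[name].append(func)
        return func
    return decorator


def run_labeled(name):
    """Run every test filed under `name`; return {test_name: passed}."""
    results = {}
    for test in _REGISTRY[name]:
        try:
            test()
            results[test.__name__] = True
        except AssertionError:
            results[test.__name__] = False
    return results


@label("smoke", "checkout")
def test_cart_total():
    # Prices in cents to avoid floating-point rounding.
    assert sum([1999, 501]) == 2500


@label("checkout")
def test_empty_cart_total():
    assert sum([]) == 0


@label("smoke")
def test_homepage_title():
    assert "Shop".lower() == "shop"
```

Calling `run_labeled("smoke")` runs only the two smoke-labeled tests, while `run_labeled("checkout")` runs the two checkout tests.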

Modern frameworks often utilize the Page Object Model (POM) to enhance maintainability and reduce code duplication. By creating a separate class for each page of the application, you can isolate the UI elements from the test logic itself. This means that if a button’s ID changes, you only need to update it in one place rather than in every test case that uses it. This level of abstraction is essential for managing large-scale automated software testing projects without becoming overwhelmed by maintenance tasks.
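A minimal sketch of the Page Object Model might look like the following. `FakeDriver` is a hypothetical stand-in so the example runs without a browser, and the locators are invented. In a real suite the page object would wrap whatever driver your automation tool provides.

```python
class FakeDriver:
    """Hypothetical stand-in for a browser driver (no real browser needed)."""

    def __init__(self):
        self.fields = {}
        self.clicked = []

    def type_into(self, locator: str, text: str) -> None:
        self.fields[locator] = text

    def click(self, locator: str) -> None:
        self.clicked.append(locator)


class LoginPage:
    """Page object: the only place that knows the login page's locators."""

    USERNAME = "#username"
    PASSWORD = "#password"
    SUBMIT = "#login-button"  # if this ID changes, only this line changes

    def __init__(self, driver):
        self.driver = driver

    def log_in(self, username: str, password: str) -> None:
        self.driver.type_into(self.USERNAME, username)
        self.driver.type_into(self.PASSWORD, password)
        self.driver.click(self.SUBMIT)


def test_login_submits_credentials():
    driver = FakeDriver()
    # The test describes intent ("log in") and never spells out a locator.
    LoginPage(driver).log_in("ada", "s3cret")
    assert driver.fields[LoginPage.USERNAME] == "ada"
    assert driver.fields[LoginPage.PASSWORD] == "s3cret"
    assert driver.clicked == [LoginPage.SUBMIT]
```

Every test that needs to log in calls `log_in`, so a change to the page's markup is absorbed by the `LoginPage` class alone.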

Automation Tool: Choosing the Right Testing Tool

Selecting the right automation tool depends on your application’s technology stack and your team’s technical expertise. There are many testing tools available, ranging from open-source libraries like Selenium to comprehensive commercial platforms. A high-quality testing tool should offer good documentation, a strong community, and support for the browsers or devices your users prefer. Choosing the wrong automation tool can lead to high maintenance costs and slow development cycles that hinder your progress.

Many teams prefer automation tools that integrate easily with their existing development environment and CI/CD pipeline. For web applications, Selenium and Cypress are popular choices that offer powerful features for automation testing. If you are working on mobile apps, you might look for testing tools like Appium that support both iOS and Android platforms. The right automation tool will feel like a natural extension of your workflow rather than a separate, burdensome task for your developers.

When evaluating a testing tool, consider how easily it handles dynamic elements and complex user interactions within your application. Some automation tools offer “low-code” options that allow non-developers to contribute to the test automation process effectively. However, for complex logic, a code-based automation tool usually provides the most flexibility and power for creating a sophisticated automated test. Ultimately, the best testing tools are those that help your team deliver reliable software with minimal friction and maximum confidence.

It is also important to consider the total cost of ownership when selecting a testing tool, including licensing fees and training time. Open-source tools are free to use but may require more technical skill to set up and maintain over time. Commercial tools often provide better support and out-of-the-box integrations but come with a recurring financial commitment. Your choice of testing tool should align with your long-term business goals and the specific technical requirements of your software product.

Automation Testing: Advanced Automation Test Strategies

Once you have the basics down, you can explore advanced automation testing strategies to further improve your software quality. This might include performance testing to ensure your application can handle a high number of concurrent users without slowing down. You can also implement automated software testing for security vulnerabilities to protect your users’ data from potential threats. These advanced methods help you build a more resilient product that is ready for a global market.

Another advanced strategy is end-to-end testing, which validates the entire system flow from the database to the front-end user interface. While these tests are more complex to maintain, they provide the highest level of confidence that the system works as a whole. You might also use performance tests to identify bottlenecks in your code before they become a problem for your customers. Every automation test you add to your strategy should provide clear value and help you achieve your quality goals efficiently.

Running automated software testing at scale usually calls for infrastructure, often cloud-based, that can run many tests in parallel. This significantly reduces the time required for a full test execution, allowing for even faster feedback loops in your development process. By provisioning your testing environments automatically, you can ensure consistency across different operating systems and browser versions. Advanced automation testing is about moving beyond simple checks to create a comprehensive safety net for your entire application ecosystem.
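On a single machine, the effect of parallel execution can be sketched with a thread pool from the standard library. The checks here are hypothetical placeholders that only sleep, standing in for browser or network round trips. A real setup would distribute actual tests across workers or machines.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def make_check(name: str, seconds: float):
    """Build a hypothetical I/O-bound check that waits, then reports success."""
    def check():
        time.sleep(seconds)  # stands in for a browser or network round trip
        return name, True
    return check


def run_parallel(checks, max_workers: int = 4):
    """Run checks concurrently and return {name: passed}."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(check) for check in checks]
        return dict(future.result() for future in futures)


checks = [make_check(f"check_{i}", 0.05) for i in range(4)]
results = run_parallel(checks)
```

With four workers the four checks wait at the same time, so the total wall-clock time is close to that of one check instead of the sum of all four. This only works safely when the tests do not share mutable state.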

Behavior-Driven Development (BDD) and Test-Driven Development (TDD) are two methodologies that can elevate your automation test strategy. BDD encourages collaboration between developers, QA, and business stakeholders by writing tests in plain language. TDD, on the other hand, involves writing a unit test before the actual code is written, ensuring that every feature is born with verification. These strategies shift the focus from finding bugs to preventing them, which is the ultimate goal of any software testing and automation program.

Test Suite: Measuring Your Testing Success

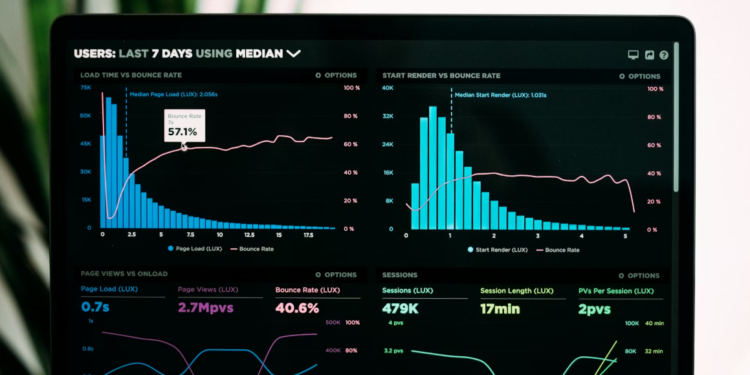

To know if your test automation efforts are paying off, you must measure the success of your test suite using key performance indicators. One of the most common metrics is test coverage, which indicates what percentage of your code is exercised by your test cases. While 100% test coverage is rarely achievable or necessary, it provides a useful benchmark for identifying untested areas of your application. A well-maintained test suite is a reflection of a team’s commitment to long-term software quality and operational excellence.

Another important metric is the feedback loop time, or how long it takes for a developer to receive results after a test execution. A fast feedback loop allows developers to fix issues while the code is still fresh in their minds, improving overall productivity and morale. You should also track the number of bugs found in production versus those caught by your automated testing process. If your test suite is catching most issues before release, your test automation strategy is likely working effectively to protect your users.
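The production-versus-caught comparison can be expressed as a simple ratio, sometimes called a defect escape rate. The function name and the numbers in the usage note are illustrative assumptions, not figures from any real project.

```python
def defect_escape_rate(caught_before_release: int, found_in_production: int) -> float:
    """Share of all known defects that reached production (0.0 to 1.0)."""
    total = caught_before_release + found_in_production
    if total == 0:
        return 0.0  # no known defects, so nothing escaped
    return found_in_production / total
```

For example, if 45 defects were caught before release and 5 surfaced in production, `defect_escape_rate(45, 5)` gives 0.1, meaning one in ten known defects escaped. Tracking this value over several releases shows whether the suite is improving.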

Regularly reviewing your test cases helps you identify and remove redundant or obsolete tests that no longer provide value to the project. This maintenance ensures that your test suite remains fast and relevant as the application evolves over time. By analyzing the results of every automation test, you can gain insights into the most fragile parts of your codebase. Measuring success is not just about numbers; it is about building a culture of quality where software testing is valued by everyone on the team.

Mean Time to Detect (MTTD) and Mean Time to Recovery (MTTR) are also vital metrics for evaluating your software testing and automation maturity. MTTD measures how quickly your test cases identify a defect after it has been introduced into the environment. MTTR tracks how long it takes the team to resolve the issue and return the system to a stable state. By optimizing these metrics, you can ensure that your automated tests are providing the maximum possible protection for your production environment.
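As an illustration of how these two averages could be computed, the sketch below uses a hypothetical `Incident` record with three timestamps. It measures recovery time from the moment of detection, which is an assumption. Some teams start the clock at a different point, and the sample timestamps are made up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    introduced: datetime  # when the defect entered the environment
    detected: datetime    # when a test or monitor flagged it
    resolved: datetime    # when the system was stable again


def mean_delta(deltas) -> timedelta:
    """Average a non-empty collection of timedeltas."""
    deltas = list(deltas)
    return sum(deltas, timedelta()) / len(deltas)


def mttd(incidents) -> timedelta:
    return mean_delta(i.detected - i.introduced for i in incidents)


def mttr(incidents) -> timedelta:
    return mean_delta(i.resolved - i.detected for i in incidents)


# Made-up sample data for illustration only.
incidents = [
    Incident(datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 30), datetime(2024, 3, 1, 11, 30)),
    Incident(datetime(2024, 3, 2, 14, 0), datetime(2024, 3, 2, 15, 30), datetime(2024, 3, 2, 16, 30)),
]
```

For this sample, `mttd(incidents)` is one hour (the average of 30 and 90 minutes) and `mttr(incidents)` is 90 minutes (the average of two hours and one hour).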

Unit Test: The Role of Unit Testing in Quality

A unit test is the smallest and most fundamental testing type in a modern software development strategy. It focuses on verifying the logic of a single function or method in isolation from the rest of the system. By writing a unit test for every small piece of logic, developers can catch errors at the very moment they are introduced. This practice of unit testing is essential for building a stable foundation for more complex automated testing efforts later in the cycle.
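A unit test in its simplest form might look like this sketch, which checks one pure function in isolation. The function is a small illustrative example rather than code from any particular project.

```python
def is_leap_year(year: int) -> bool:
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def test_typical_leap_year():
    assert is_leap_year(2024)


def test_typical_common_year():
    assert not is_leap_year(2023)


def test_century_is_not_leap():
    assert not is_leap_year(1900)


def test_fourth_century_is_leap():
    assert is_leap_year(2000)
```

Each test covers one rule and has one clear expected outcome, so a failure names the exact piece of logic that regressed.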

Because they are small and isolated, unit tests run extremely fast, providing nearly instant feedback to the developer during the coding process. This speed makes unit testing an ideal candidate for running locally on a developer’s machine before they even commit their code to the repository. A comprehensive set of unit tests acts as living documentation, explaining how individual parts of the system are intended to behave. Every unit test you write today saves time and effort in the future by preventing regressions in core logic.

To make unit testing more effective, developers often use mocking and stubbing to simulate external dependencies like databases or web services. This ensures that the unit test remains focused on the specific logic being tested rather than external factors. When combined with a testing tool that measures code coverage, unit testing provides a high level of confidence in the building blocks of your application. It is the first line of defense in a comprehensive software testing and automation strategy.
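Here is a small sketch of mocking with Python's standard `unittest.mock`. The `price_in_currency` function and the `get_rate` method on the rate client are hypothetical. The mock replaces a web service that would otherwise make the test slow and dependent on the network.

```python
from unittest.mock import Mock


def price_in_currency(amount_usd: float, currency: str, rate_client) -> float:
    """Convert a USD amount using a rate fetched from an external service."""
    rate = rate_client.get_rate("USD", currency)
    return round(amount_usd * rate, 2)


def test_conversion_uses_fetched_rate():
    # The mock stands in for the real (hypothetical) exchange-rate client.
    rate_client = Mock()
    rate_client.get_rate.return_value = 0.5

    assert price_in_currency(10.0, "EUR", rate_client) == 5.0
    # Also verify the dependency was called as expected.
    rate_client.get_rate.assert_called_once_with("USD", "EUR")
```

Passing the dependency in as a parameter is what makes the substitution easy. The test controls the rate, so the only thing under test is the conversion logic.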

AI-Powered Test: Future Trends: AI and Beyond

The future of software testing and automation is being shaped by artificial intelligence and machine learning technologies. An AI-powered test can automatically adapt to changes in the user interface, reducing the need for manual test script updates. This innovation significantly improves the developer experience by reducing the maintenance burden associated with traditional test automation. As these tools evolve, we can expect automated tests to become even more intelligent and self-healing over time.

AI can also help generate new test cases based on actual user behavior patterns observed in production environments. This ensures that your automation testing efforts are focused on the paths that real users actually take through your application. By leveraging an AI-powered test, teams can identify edge cases that might have been overlooked during the initial design phase. The goal is to create a more efficient and effective automated software testing process that scales with the speed of modern digital business.

Another emerging trend is “Shift Left” testing, where automated tests are integrated even earlier in the development process. By moving testing closer to the initial design and coding phases, teams can identify architectural flaws before they become deeply embedded. AI-powered testing tools are making this possible by providing real-time suggestions and vulnerability scans as developers write code. This proactive approach to software testing and automation is set to become the standard for high-performing engineering organizations.

Manual Testing: Overcoming Common Automation Challenges

Despite its many benefits, test automation comes with its own set of challenges that teams must navigate carefully. One common issue is the need for human intervention when tests fail due to environmental issues rather than actual bugs. This can lead to “test fatigue” where team members begin to ignore automated testing results because they are frequently inaccurate. To overcome this, it is important to treat your test scripts with the same care and quality as your production code to ensure reliability.

Another challenge is managing the testing tasks that are difficult to automate, such as complex visual layouts or physical hardware interactions. In these cases, manual testing is often the more practical and reliable choice for ensuring a high-quality user experience. You must also ensure that your automation test suite does not become so large that it slows down your development cycle. Balancing the scope of your test automation is key to maintaining a fast and efficient workflow for the entire engineering team.

Flaky tests—tests that pass or fail inconsistently without any code changes—are perhaps the most significant hurdle in automated software testing. These are often caused by timing issues, network latency, or unstable test environments. Addressing flakiness requires a dedicated effort to stabilize the infrastructure and refine the test script logic. By prioritizing test stability over test quantity, you can build a test suite that the team actually trusts and relies on for daily deployments.
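One common remedy for timing-related flakiness is to poll for a condition with a deadline instead of sleeping for a fixed interval. The `wait_until` helper below is a hypothetical sketch of that idea. Many automation tools ship their own explicit-wait utilities that serve the same purpose.

```python
import time


def wait_until(condition, timeout: float = 2.0, interval: float = 0.05) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


def test_waits_for_eventual_state():
    calls = {"n": 0}

    def ready():
        # Simulates a resource that becomes ready on the third check.
        calls["n"] += 1
        return calls["n"] >= 3

    assert wait_until(ready, timeout=1.0, interval=0.01) is True


def test_gives_up_after_timeout():
    assert wait_until(lambda: False, timeout=0.05, interval=0.01) is False
```

The test proceeds as soon as the condition holds, so it is both faster than a generous fixed sleep and more tolerant of slow environments than a short one.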

Conclusion

Mastering software testing and automation is a journey that requires continuous learning and adaptation to new technologies. By combining a strong software test foundation with modern test automation, you can deliver high-quality software at a rapid pace. Remember that the goal is not just to automate everything, but to automate the right things to support your team’s long-term success. With the right testing tools and a clear strategy, you can build reliable software that delights your customers and stands the test of time in a competitive market.

As you refine your approach, continue to monitor your metrics and adjust your test cases to reflect the evolving needs of your users. The landscape of software testing is always changing, but the core principles of accuracy, reliability, and speed remain constant. By fostering a culture that values quality at every stage, you ensure that your automated tests provide a solid foundation for innovation. Ultimately, a commitment to excellence in software testing and automation is a commitment to the success of your product and your users.